Many camera manufacturers offer several compression technologies to choose from. Usually, there is at least one intra-frame compression option (MJPEG) and one inter-frame compression option (H.264). With these choices, it can be unclear which one to use.

Is it better to use H264 or MJPEG? In this article, I will discuss everything that you need to know about MJPEG and H264.

What Exactly Is The Distinction Between MJPEG and H264?

The H.264 and MJPEG codecs differ in that MJPEG compresses every frame on its own, while H.264 compresses across frames. In MJPEG, each video frame is compressed individually, much as if you were manually compressing a series of JPEG photos.

In H.264, some frames are compressed on their own (called I-frames, or intra-coded frames), but the majority of frames record only the changes from the preceding frame (called P-frames, or predicted frames). Compared to MJPEG, which re-encodes each frame in full, this can save a lot of bandwidth. (1)
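To make the idea concrete, here is a minimal Python sketch of storing full frames versus storing only the changes. It is a toy model, not how H.264 actually works internally: real encoders use motion-compensated prediction and transform coding, while this sketch simply records which pixel values changed. The function names and the `keyframe_interval` parameter are assumptions made for illustration.

```python
def encode(frames, keyframe_interval=4):
    """Toy inter-frame encoder (illustrative only).

    Every `keyframe_interval`-th frame is stored in full as an 'I' record.
    Every other frame is stored as a 'P' record holding only the pixels
    that differ from the preceding frame.
    """
    encoded = []
    previous = None
    for n, frame in enumerate(frames):
        if previous is None or n % keyframe_interval == 0:
            encoded.append(("I", list(frame)))
        else:
            changes = {i: new for i, (old, new) in enumerate(zip(previous, frame))
                       if old != new}
            encoded.append(("P", changes))
        previous = frame
    return encoded


def decode(encoded):
    """Rebuild every frame by applying each 'P' record to the previous frame."""
    frames = []
    current = None
    for kind, payload in encoded:
        if kind == "I":
            current = list(payload)
        else:
            current = list(current)
            for index, value in payload.items():
                current[index] = value
        frames.append(current)
    return frames


# A tiny 8-pixel "scene": one bright dot moves one pixel per frame.
frames = [[0] * 8 for _ in range(6)]
for n, frame in enumerate(frames):
    frame[n] = 255

encoded = encode(frames)
stored_values = [len(payload) for _, payload in encoded]
print(stored_values)               # [8, 2, 2, 2, 8, 2]
print(decode(encoded) == frames)   # True
```

In this toy scene, an MJPEG-style encoder would store 8 values for every frame, while the I/P scheme stores 8 values only for the two I-frames and 2 values for each P-frame.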

How They Affect Surveillance Camera Monitoring

Whether you use MJPEG or H.264, it is critical to understand the visual complexity of the scenes your camera captures. This matters even more with H.264, because its bit rate varies more with complexity than MJPEG's does (although in absolute numbers for our tests, H.264 bandwidth utilization was always lower at any complexity).

Visual complexity refers to how much activity is happening in the source video you are capturing. A person speaking in front of a white wall, for example, is far less ‘complex’ than a densely populated venue. Overall, the more color, shapes, sizes, objects, and movements there are in a scene, the more complex it is.

H.264’s bit rate advantage is greatest in less complex scenes, because little changes from one frame to the next and compression across frames is at its most effective.

MJPEG format, on the other hand, does not compress across frames, so it gains less from less complex scenes.

However, because MJPEG must compress individual images, and more complex images still require more bandwidth, MJPEG bandwidth demands do rise with complexity, only more gradually than H.264's. It is a fallacy to assume that MJPEG’s bandwidth requirements are constant or that stream size does not vary with complexity.

Many manufacturers configure their MJPEG streams to use fixed image sizes, creating the impression that MJPEG is necessarily fixed. They can do so safely because the variation in bandwidth due to complexity is relatively small for MJPEG. However, this comes at the cost of a minor quality loss (or some bandwidth inefficiency).
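The relationship between scene activity and bandwidth described in this section can be sketched with a small simulation. This is a rough illustration under stated assumptions, not a codec benchmark: `zlib` stands in for the real compressors, a frame is just a block of random bytes (a heavily textured scene), and "complexity" is modeled only as the fraction of bytes that change between frames. Because the toy ignores spatial complexity, its intra-frame total stays nearly flat, unlike real MJPEG. The function name and parameters are hypothetical.

```python
import random
import zlib


def stream_sizes(change_fraction, n_frames=10, frame_bytes=4096, seed=0):
    """Return (intra_total, inter_total) compressed sizes in bytes.

    intra: every frame is compressed on its own (MJPEG-like).
    inter: the first frame is compressed in full, later frames are stored
           as the compressed XOR difference from the previous frame.
    """
    rng = random.Random(seed)
    frame = bytearray(rng.randrange(256) for _ in range(frame_bytes))
    changes_per_frame = int(change_fraction * frame_bytes)
    intra_total = 0
    inter_total = 0
    previous = None
    for _ in range(n_frames):
        intra_total += len(zlib.compress(bytes(frame)))
        if previous is None:
            inter_total += len(zlib.compress(bytes(frame)))
        else:
            delta = bytes(a ^ b for a, b in zip(frame, previous))
            inter_total += len(zlib.compress(delta))
        previous = bytes(frame)
        # Simulate scene activity: overwrite a fraction of the bytes.
        for i in rng.sample(range(frame_bytes), changes_per_frame):
            frame[i] = rng.randrange(256)
    return intra_total, inter_total


calm = stream_sizes(change_fraction=0.01)   # e.g. a person in front of a wall
busy = stream_sizes(change_fraction=0.30)   # e.g. a crowded venue

for label, (intra, inter) in (("calm", calm), ("busy", busy)):
    print(f"{label}: intra={intra} B, inter={inter} B, ratio={inter / intra:.2f}")
```

In this model the inter-frame stream is smaller in both cases, but its advantage shrinks as more of the scene changes, which mirrors the behavior described above.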

How to Benefit from Both MJPEG and H264

A single monitoring device can support both H.264 and MJPEG streams simultaneously. Cameras and encoders with simultaneous H.264 and MJPEG functionality are available, although they require more processing power than single-mode devices.

The video compression standard is chosen based on the desired transmission bandwidth, storage capacity, and quality parameters. The MJPEG compression format encodes each video frame individually. It produces high image quality and preserves the detail of every frame, at the cost of significantly higher bandwidth requirements.

However, many still use it in IP cameras due to its high quality in a wide range of environments and applications. H.264 is a more recent compression standard. When compared to MJPEG, this codec significantly reduces bandwidth requirements without sacrificing quality. H.264 techniques are advanced, necessitating more sophisticated hardware.

Overall, you can benefit from both MJPEG and H.264 by using each where it fits. I highly recommend using H.264 in crowded areas. If you need higher recording quality to capture important details, that is the time to use MJPEG.

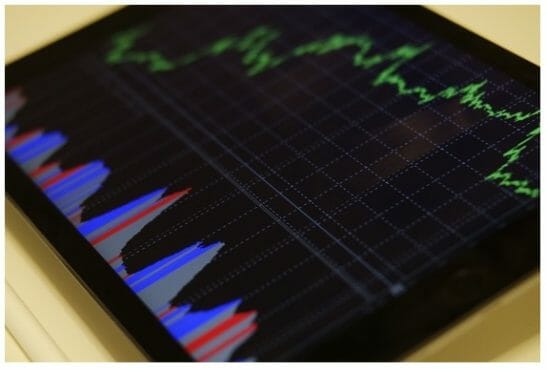

Comparing MJPEG and H264 in Codecs and Bitrates

| Codec | Bitrate and mode | Average PSNR (dB) |
|---|---|---|
| MJPEG | 500 Mbps | 41.831248 |
| H.264 | 500 Mbps, ALL-I | 43.983679 |
| H.264 | 500 Mbps, IPB | 54.742351 |

Under test conditions, H.264 and MJPEG are reasonably comparable. Although MJPEG lags behind H.264, the differences aren’t excessive.

At 500 Mbps, the SSIM and PSNR averages differ by only 0.3 percent and 5 percent, respectively. Both have PSNRs above 40 and SSIMs above 0.98. However, H.264’s more advanced processing puts it ahead, with significantly better minimum PSNRs (43.1 versus 32.5) and higher maximum PSNRs (57.7 versus 45.8).
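For readers unfamiliar with the metric, the sketch below shows the standard PSNR formula, 10 · log10(MAX² / MSE), and reproduces the roughly 5 percent gap from the table averages. It assumes 8-bit samples (a maximum value of 255) and assumes the 5 percent figure compares the H.264 ALL-I average with the MJPEG average. The helper names are illustrative and are not taken from the test setup.

```python
import math


def psnr(reference, distorted, max_value=255):
    """Peak signal-to-noise ratio in dB between two equal-length sample lists."""
    if len(reference) != len(distorted):
        raise ValueError("inputs must have the same length")
    mse = sum((r - d) ** 2 for r, d in zip(reference, distorted)) / len(reference)
    if mse == 0:
        return math.inf  # identical signals
    return 10 * math.log10(max_value ** 2 / mse)


def relative_gap(value, baseline):
    """Fractional difference of `value` relative to `baseline`."""
    return (value - baseline) / baseline


# Average PSNR values taken from the table in this article.
mjpeg_psnr = 41.831248
h264_all_i_psnr = 43.983679

gap = relative_gap(h264_all_i_psnr, mjpeg_psnr)
print(f"{gap:.1%}")  # 5.1%
```

Higher PSNR means the compressed frame is closer to the original, and because the scale is logarithmic, a few dB is a meaningful difference.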

That said, you cannot simply cut the bitrate in half with H.264 and keep the averages the same. When H.264 was reduced to 250 Mbps, ALL-I, 4:2:2, the PSNR average was 37.5 and the SSIM was 0.954.

ALL-I and MJPEG are essentially similar in that they both store all of the frames as entire images. Since H.264 has more advanced spatial encoding options (such as variable-sized blocks), it is marginally ahead.

H.264 really shines when you enable interframe compression options, that is, an IPB mode. Since frames are no longer self-contained, interframe compression drastically reduces the number of bits required to represent the majority of them.

MJPEG vs H264: Pros & Cons

MJPEG

Motion JPEG, also known as M-JPEG, is a video compression format based on the popular JPEG compression used for still images. Playing a series of still JPEG images in sequence creates a video and gives the appearance of motion.

MJPEG is a high-quality video compression format. Because each video frame is independent, the rest of the footage remains unaffected if one frame is lost. This robustness, however, comes at the cost of more bandwidth and more storage space. (2)
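The effect of a lost frame can be sketched in a few lines of Python. This is a simplified model under stated assumptions: it considers only I-frames and P-frames, treats a P-frame as undecodable once any frame it depends on is missing, and ignores the error concealment that real decoders apply. The function name is hypothetical.

```python
def decodable_frames(frame_types, lost_index):
    """Return the indices of frames that can still be decoded.

    frame_types: a string such as "IPPPIPPP" ('I' = self-contained frame,
                 'P' = frame that depends on the previous frame).
    lost_index:  index of the single frame lost in transit.
    """
    decodable = []
    chain_intact = False
    for i, kind in enumerate(frame_types):
        received = i != lost_index
        if kind == "I":
            # An I-frame needs nothing but itself.
            chain_intact = received
        else:
            # A P-frame needs itself and an unbroken chain back to an I-frame.
            chain_intact = chain_intact and received
        if chain_intact:
            decodable.append(i)
    return decodable


# MJPEG-like stream: every frame is independent, so only the lost frame is missing.
print(decodable_frames("IIIIIIII", lost_index=1))  # [0, 2, 3, 4, 5, 6, 7]

# Inter-frame stream: losing frame 1 breaks playback until the next I-frame.
print(decodable_frames("IPPPIPPP", lost_index=1))  # [0, 4, 5, 6, 7]
```

The shorter the interval between I-frames, the sooner an inter-frame stream recovers, but the more bandwidth it uses.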

Pros

- Ideal for Mega-Pixel Cameras

- Improved Image Quality (clear individual images)

- Adaptability (fast image stream recovery in the event of packet loss)

- Interoperability (access to industry-standard compression/decompression on all PCs)

- Lightweight decompression on the PC (more video streams per PC)

- Supported by the vast majority of cameras

- Reduced Latency (better live viewing and more responsive PTZ control)

- Graceful degradation (reduced bandwidth does not reduce image quality)

- Consistent image quality (quality remains constant regardless of image complexity)

- Capable of detecting live motion and analyzing images

- Low complexity (for image searches and manipulation)

- Up to 30 frames per second can be displayed and recorded

Cons

- Extensive use of bandwidth (at frame rates above 10 FPS)

- Storage requirements are quite high (at frame rates above 10 FPS)

- There is no functionality for synchronized sound

H264

H.264, also known as MPEG-4 Part 10/AVC (Advanced Video Coding), was the most recent MPEG video compression standard and the leading video encoding technology as of 2010, since it can compress video significantly without sacrificing video quality. It offers up to an 80% reduction in file size compared to M-JPEG and a 50% reduction compared to the earlier MPEG-4 Part 2 standard.

H.264 was defined jointly by two standards groups: the ITU-T’s Video Coding Experts Group from the telecommunications sector and the ISO/IEC Moving Picture Experts Group from the information technology sector. With this support, H.264 is expected to outpace the other standards in development and adoption.
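To see what the "up to 80%" figure quoted above could mean for storage, here is a back-of-the-envelope calculation in Python. The 8 Mbps MJPEG bitrate is a hypothetical example value, not a measurement from this article, and the 80% reduction is treated as a best case rather than a guarantee.

```python
def storage_gb(bitrate_mbps, hours):
    """Storage in gigabytes (10**9 bytes) for a constant bitrate over a duration."""
    bits = bitrate_mbps * 1_000_000 * hours * 3600
    return bits / 8 / 1_000_000_000


mjpeg_mbps = 8.0                      # hypothetical MJPEG stream bitrate
best_case_reduction = 0.80            # "up to 80%" figure quoted above
h264_mbps = mjpeg_mbps * (1 - best_case_reduction)

mjpeg_daily = storage_gb(mjpeg_mbps, hours=24)
h264_daily = storage_gb(h264_mbps, hours=24)

print(f"MJPEG: {mjpeg_daily:.1f} GB per camera per day")   # MJPEG: 86.4 GB per camera per day
print(f"H.264: {h264_daily:.1f} GB per camera per day")    # H.264: 17.3 GB per camera per day
```

Actual savings depend on scene complexity, frame rate, and encoder settings, so measured bitrates from your own cameras should replace the example value.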

Pros

- Increased compression rates (above 10 FPS)

- Reduced storage needs (at 10 FPS or higher)

- Can keep a constant bit rate (CBR)

- Excellent Streaming Protocol (designed for real-time viewing)

- Audio and Video Synchronization (for live and recorded streams)

- Up to 30 frames per second can be displayed and recorded.

Cons

- Many cameras have storage difficulties (fragmentation)

- Decompression load on the PC is high (fewer video streams per PC)

- Less robust (can lose video if bandwidth drops)

- Increased latency (delayed live viewing and sluggish PTZ control)


References

(1) bandwidth – https://www.verizon.com/info/definitions/bandwidth/

(2) storage space – https://study.com/academy/lesson/what-is-online-data-storage.html